The RIoT Secure Model

From Principles to System

Throughout the preceding chapters, a set of themes has emerged.

Constraints at the edge are not temporary limitations, but defining characteristics of the environment. Systems must operate with limited resources, intermittent connectivity, and long operational lifetimes.

They must be secure, adaptable, and manageable over time — not only at the moment of deployment, but throughout their entire lifecycle.

Individually, these observations point to specific challenges. Together, they describe a different way of thinking about how Edge systems should be designed.

At the core of this shift is a simple idea: communication, security, execution, and lifecycle management are not separate concerns to be addressed independently, but interdependent elements of a single system. Each influences the others, and each must be designed with the same constraints in mind.

When treated in isolation, these concerns introduce complexity.

When considered together, they define structure. This structure does not emerge by layering additional functionality onto existing designs.

It requires a different starting point — one that assumes constraint, rather than attempting to abstract it away. One that treats lifecycle not as an extension, but as a fundamental property of the system. One that separates responsibilities not only logically, but physically, where necessary.

Over time, these principles begin to take shape.

What starts as a set of architectural considerations evolves into something more cohesive. Patterns repeat. Boundaries become clearer. Interactions become more predictable. The system becomes easier to reason about — not because it is simpler, but because it is structured.

This is the point at which principles become implementation.

Not as a direct translation, but as a system designed around the realities they describe. A system where communication is efficient and secure by design, where execution environments are isolated and controlled, and where lifecycle management is embedded rather than appended.

The result is not a collection of independent components — it is a platform.

A platform that reflects the constraints of the Edge, rather than attempting to hide them. One that enables devices to operate independently, while remaining part of a controlled and observable system. One that allows application logic to evolve without compromising security or stability.

This is the foundation upon which RIoT Secure is built. Not as a response to a single problem, but as the outcome of addressing many, consistently and in relation to one another.

What begins as a set of constraints ultimately defines the system itself.

Architecture at a Glance

The platform is structured as a set of clearly defined layers, each responsible for a specific aspect of how Edge systems communicate, operate, and evolve over time.

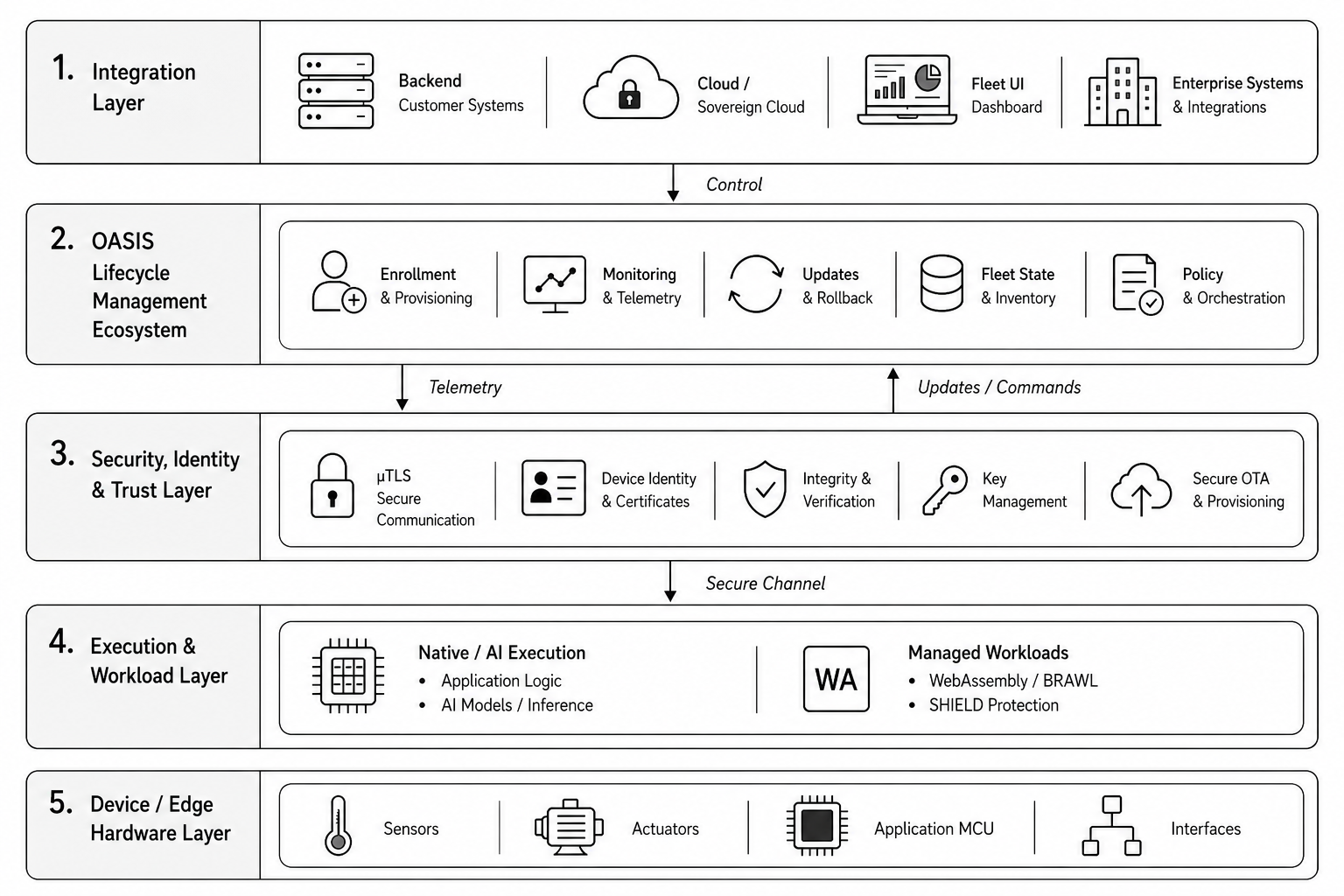

Figure 14: Full-Stack RIoT Secure Architecture

At the foundation of the system is the device itself — sensors, actuators, interfaces, and the application microcontroller responsible for interacting with the physical environment. This is where data is collected, decisions are executed, and operational behavior is realized.

Above this sits the execution layer. Within this domain, application logic, AI inference, and evolving workflows operate independently from the communication and lifecycle infrastructure beneath them.

Depending on the requirements of the deployment, this execution can take place either through native application environments or through a controlled WebAssembly runtime using BRAWL and SHIELD.

This separation is intentional.

Communication, security, identity, and lifecycle operations are handled independently through a dedicated control domain. Within this layer, µTLS establishes secure and efficient communication, while identity, provisioning, attestation, and update mechanisms provide the foundation required to manage systems continuously over time.

Above these layers, OASIS provides the lifecycle control plane.

Devices are enrolled, monitored, updated, governed, and eventually decommissioned through a consistent operational model that maintains visibility into the state of the system throughout its lifecycle. Rather than treating the lifecycle as a collection of isolated functions, the platform approaches it as a continuous process integrated into the structure of the system itself.

At the integration layer, the platform connects with existing operational environments.

Customer backends, cloud platforms, dashboards, and enterprise systems remain part of the broader ecosystem, allowing lifecycle management and secure communication to be introduced without requiring existing infrastructure to be replaced.

Taken together, these layers form a cohesive system.

Each part is designed to operate within the constraints of the Edge while remaining aligned with the others. Communication, execution, security, and lifecycle management are not treated as separate capabilities added over time, but as interconnected elements of the same architectural model.

The result is a platform designed not only to deploy systems at the Edge, but to sustain and evolve them over time.

Core Platform Components

The platform is composed of several core components, each addressing a specific aspect of communication, execution, security, or lifecycle management at the Edge.

While each component can be understood independently, they are designed to operate together as part of a cohesive system structured around constraint, control, and long-term evolution.

This distinction is important.

The platform is not built around isolated features introduced to solve individual problems in isolation. Instead, each component reflects a specific architectural responsibility within the broader system. Communication, lifecycle management, execution, and protection are treated as interconnected domains, designed to support one another rather than operate independently.

| Component | Purpose | Role Within the Platform |
|---|---|---|
| µTLS | Secure constrained communication | Provides efficient, secure communication designed specifically for resource-constrained Edge environments |
| FUSION | Hardware separation model | Separates application execution from communication, security, and lifecycle operations through dedicated execution domains |
| OASIS | Lifecycle control plane | Manages enrollment, monitoring, updates, rollback, fleet state, provisioning, and operational lifecycle processes |
| BRAWL | WebAssembly runtime environment | Enables portable and evolving workloads to execute safely within a controlled Edge runtime |
| SHIELD | Runtime protection layer | Protects deployed workloads, application logic, and execution integrity within the software-defined execution environment |

The behavior of the system emerges from how these components interact.

µTLS establishes the communication foundation through which devices securely participate in the system. FUSION defines architectural separation between execution and control domains. OASIS maintains lifecycle visibility and operational governance across distributed deployments. BRAWL and SHIELD extend this model into software-defined execution, enabling workloads to evolve while remaining controlled and protected over time.

Taken together, these components form the operational structure of the platform.

Each addresses a distinct requirement of operating at the Edge, while remaining aligned with the same underlying principles of efficiency, separation, security, and lifecycle continuity.

A Platform Designed for Constraint

Many systems are designed under the assumption that resources are abundant.

Processing power can be scaled. Memory can be increased. Connectivity is expected to be stable and continuous. In these environments, complexity is often managed through abstraction, and constraints are addressed by adding capacity rather than reconsidering structure.

At the edge, these assumptions do not hold.

Devices operate within fixed boundaries. Memory, processing, and power are limited. Connectivity may be intermittent or unreliable. Systems are expected to function not only under ideal conditions, but in environments where failure is an expected condition rather than an exception.

Designing for this environment requires a different approach.

Constraints cannot be deferred or hidden behind layers of abstraction. They must be addressed directly, shaping how systems are structured from the outset. Efficiency becomes a requirement rather than an optimization. Predictability becomes more important than flexibility. Independence becomes necessary for resilience.

This perspective defines the foundation of the platform.

Rather than adapting existing models to constrained environments, the system is built with those constraints as its starting point. Communication is designed to operate within limited bandwidth. Security is implemented in a way that respects resource limitations. Execution environments are structured to avoid contention and unpredictability.

This approach extends beyond individual components.

It influences how the system behaves as a whole. Devices are able to operate independently when connectivity is unavailable, and to rejoin the system without disruption when it is restored. Interactions are designed to be efficient, predictable, and verifiable, even under changing conditions.
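The offline-then-rejoin behavior described above can be illustrated with a small store-and-forward buffer. This is a minimal sketch under stated assumptions, not the platform's implementation: the `StoreAndForward` class, its `capacity` parameter, and the drop-oldest policy are hypothetical choices made purely for illustration.

```python
from collections import deque
from typing import Any, Callable


class StoreAndForward:
    """Buffers readings while offline and flushes them in order on reconnect.

    Hypothetical helper: the name, capacity, and drop-oldest policy are
    illustrative assumptions, not part of any documented API.
    """

    def __init__(self, send: Callable[[Any], None], capacity: int = 64) -> None:
        self._send = send
        # A bounded deque keeps memory use fixed; when full, the oldest
        # reading is discarded to make room for the newest one.
        self._pending = deque(maxlen=capacity)
        self.online = False

    def submit(self, reading: Any) -> None:
        """Send immediately when online, otherwise keep the reading locally."""
        if self.online:
            self._send(reading)
        else:
            self._pending.append(reading)

    def set_online(self, online: bool) -> None:
        """Record the link state and flush buffered readings on reconnect."""
        self.online = online
        if online:
            while self._pending:
                self._send(self._pending.popleft())


sent = []
buffer = StoreAndForward(sent.append, capacity=2)
buffer.submit(1)          # offline: buffered
buffer.submit(2)          # offline: buffered
buffer.submit(3)          # offline: buffer full, reading 1 is dropped
buffer.set_online(True)   # reconnect: readings 2 and 3 are flushed in order
buffer.submit(4)          # online: sent immediately
```

The fixed capacity reflects the point made earlier in this section: memory is a hard boundary, so the behavior when the buffer fills has to be decided up front rather than left to chance.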

Over time, this results in a system that is not only functional within constrained environments, but aligned with them. One that does not rely on ideal conditions to operate correctly, and does not degrade unexpectedly when those conditions change. One that is capable of sustaining long-term operation without requiring continuous intervention or redesign.

This is the context in which the platform was developed. Not as an adaptation of existing approaches, but as a system shaped by the realities of the environments in which it is intended to operate.

The system is not optimized for constraint — it is defined by it.

Communication as a Foundation

In distributed systems, communication is often treated as an assumed capability.

Data is exchanged between devices and backend systems using established protocols, with security layered on through standard mechanisms. In many environments, this approach is sufficient, supported by reliable connectivity and hardware capable of handling the associated overhead.

At the edge, the conditions are different.

Devices operate with limited bandwidth, constrained processing power, and variable connectivity. Under these conditions, communication cannot be treated as a secondary concern. It must be efficient, predictable, and secure — without introducing unnecessary complexity or resource overhead.

This is where the role of communication changes. It is no longer simply a means of transferring data. It becomes a foundational element of the system, influencing how devices establish trust, how they interact, and how they are managed over time.

Within the platform, this foundation is defined through µTLS.

Rather than adapting existing protocols designed for more capable environments, µTLS is structured specifically for constrained systems. It preserves the essential properties of secure communication — confidentiality, integrity, and authentication — while removing assumptions that do not hold at the edge.

This approach is not incremental — it represents a rethinking of how secure communication can be implemented under constraint. In practical terms, this has a measurable impact. By reducing protocol overhead and eliminating unnecessary complexity, µTLS significantly lowers the amount of data required for secure communication — by as much as ninety-five percent compared to traditional approaches such as HTTPS or MQTT over TLS.

This efficiency is not achieved by weakening security, but by redesigning how it is applied within constrained environments.

This efficiency extends beyond performance.

Lower transmission overhead makes communication more viable across limited or costly networks. It reduces power consumption, improves responsiveness, and enables more frequent interaction without increasing operational burden. Systems are able to maintain secure communication as a consistent behavior, rather than a compromise between capability and constraint.
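To give a sense of scale, the short calculation below applies a ninety-five percent reduction to an invented workload. Only the ninety-five percent figure comes from the text, where it is stated as an upper bound ("as much as"). The 6,000-byte baseline, the hourly exchange rate, and the fleet size are hypothetical numbers chosen for easy arithmetic, not measurements.

```python
def reduced_size(baseline_bytes: int, reduction_percent: int) -> int:
    """Bytes remaining after removing `reduction_percent` of the baseline."""
    if not 0 <= reduction_percent <= 100:
        raise ValueError("reduction_percent must be between 0 and 100")
    # Integer arithmetic avoids floating-point rounding noise.
    return baseline_bytes * (100 - reduction_percent) // 100


def daily_fleet_bytes(bytes_per_exchange: int, exchanges_per_day: int, devices: int) -> int:
    """Total bytes transmitted per day across the whole fleet."""
    return bytes_per_exchange * exchanges_per_day * devices


BASELINE = 6_000   # hypothetical bytes per secured exchange
EXCHANGES = 24     # hypothetical: one exchange per device per hour
DEVICES = 1_000    # hypothetical fleet size

reduced = reduced_size(BASELINE, 95)
before = daily_fleet_bytes(BASELINE, EXCHANGES, DEVICES)
after = daily_fleet_bytes(reduced, EXCHANGES, DEVICES)
print(reduced, before, after)  # prints: 300 144000000 7200000
```

Under these assumed numbers, daily fleet traffic falls from 144 MB to 7.2 MB, which is the kind of difference that matters on metered or low-bandwidth links.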

Importantly, this foundation extends across the lifecycle.

From initial provisioning and identity establishment, through ongoing operation and updates, communication remains consistent in how it is secured and managed. Devices are able to interact with the system using the same underlying model, regardless of their state or role within the deployment.

In this way, communication is not introduced as an additional layer — it is embedded within the structure of the system itself, forming the basis upon which other capabilities are built.

This approach to secure communication is formalized in RIoT Secure's patented µTLS protocol (US 11,997,165 B2), reflecting a design that is both efficient in practice and grounded in first principles.

Architectural Separation in Practice

The concept of separating concerns is well understood in software design.

Systems are structured to isolate functionality, reduce interdependencies, and improve maintainability. In many cases, this separation is achieved through layers, abstractions, and modular components that coexist within a shared execution environment.

At the edge, this approach has limitations.

When multiple concerns — application logic, communication, security, and lifecycle management — are combined within a single execution context, they remain inherently coupled. They compete for the same resources, share the same memory space, and are subject to the same constraints. Changes in one area can affect others in ways that are difficult to predict and control.

This is where separation takes on a different meaning. Rather than being implemented solely through software structure, it is enforced through architecture.

Within the platform, this is achieved through FUSION. Instead of combining all responsibilities within a single microcontroller, the system is divided across dedicated components. Application logic executes within its own environment, while communication, security, and lifecycle management are handled independently.

This separation is not simulated — it is physical.

In practical terms, this means that each domain operates within its own set of constraints. The application environment can be optimized for performance, determinism, or specialized processing such as AI, without being influenced by communication overhead or lifecycle operations.

At the same time, the communication and control layer can evolve independently, adapting to new requirements without impacting application behavior.

This independence has several effects. It reduces contention for resources. It limits the scope of failure. It allows each part of the system to be developed, updated, and maintained without introducing unintended side effects in others. More importantly, it creates clear boundaries that define how the system behaves under change.

Updates to communication or security can be introduced without altering application logic. Application behavior can evolve without compromising device identity or connectivity. Failures can be contained within a specific domain, rather than propagating across the entire system.
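The contract between the two domains can be modeled in a few lines. The separation described here is physical, so the sketch below is only a software analogy of the boundary, not a representation of how FUSION is built. The class names, the message tuple, and the bounded queue standing in for the inter-domain link are all assumptions introduced for illustration.

```python
from queue import Empty, Queue


class ApplicationDomain:
    """Stands in for the application microcontroller (hypothetical model)."""

    def __init__(self, outbox: Queue) -> None:
        self._outbox = outbox
        self.samples_taken = 0

    def sample(self, value: float) -> None:
        """Do application work and hand the result across the boundary."""
        self.samples_taken += 1
        self._outbox.put(("telemetry", value))


class ControlDomain:
    """Stands in for the communication and lifecycle side (hypothetical model)."""

    def __init__(self, inbox: Queue) -> None:
        self._inbox = inbox
        self.stack_version = 1
        self.forwarded = []

    def update_stack(self, version: int) -> None:
        """Change the communication stack without touching application state."""
        self.stack_version = version

    def poll(self) -> None:
        """Forward at most one pending message, tagged with the stack version."""
        try:
            kind, value = self._inbox.get_nowait()
        except Empty:
            return
        self.forwarded.append((self.stack_version, kind, value))


link = Queue(maxsize=8)   # the only thing the two domains share
app = ApplicationDomain(link)
control = ControlDomain(link)

app.sample(21.5)          # application keeps working
control.update_stack(2)   # control side is updated independently
control.poll()            # message is forwarded by the updated stack
```

Neither class holds a reference to the other. Everything that crosses the boundary goes through `link`, which is what makes the effect of a change on one side predictable from the other.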

Over time, this leads to a system that is easier to reason about.

Not because it is simpler in terms of capability, but because its structure is explicit. Responsibilities are clearly defined, interactions are controlled, and the impact of change is more predictable. This is the practical realization of separation of concerns at the edge. Not as a design pattern applied within a single system, but as an architectural principle that defines how the system is constructed.

Separation of concerns is not achieved through abstraction — it is defined by architecture.

Lifecycle as a First-Class Capability

As systems move beyond individual devices and into distributed deployments, the nature of management changes.

What was once handled locally must now be coordinated across many devices, often operating in different environments and under varying conditions. Visibility becomes more important. Control becomes more complex. The system must be understood not only at the level of individual components, but as a whole.

In many cases, lifecycle management is introduced incrementally.

Monitoring is added to observe behavior. Update mechanisms are implemented to address changes. Configuration tools are developed to manage variation across deployments.

Each capability addresses a specific need, but they are often implemented separately, without a unified model of how the system evolves over time.

This leads to fragmentation.

Different aspects of the system are managed through different tools, with limited coordination between them. Processes become manual, or partially automated. The state of the system is distributed across multiple interfaces, making it harder to maintain a consistent view of what is deployed, what is changing, and what requires attention.

A unified approach avoids this. The operational visibility it creates establishes a consistent record throughout the lifecycle of the system. Device identity, workload versions, update history, and system state can be observed and verified over time, supporting environments where traceability, operational integrity, and long-term accountability are increasingly important.

Within the platform, lifecycle management is treated differently.

Rather than being introduced as a set of independent capabilities, it is defined as a core function of the system. Devices are not only connected — they are represented, managed, and governed through a consistent model that reflects their state, configuration, and behavior over time.

This model is expressed through OASIS.

It provides a control plane through which devices are provisioned, operated, updated, and observed as part of a continuous process. From initial onboarding to ongoing operation and eventual decommissioning, each stage of the lifecycle is handled within the same framework.
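One way to picture a lifecycle handled "within the same framework" is as a small state machine with an explicit transition table and a recorded history. The sketch below is an illustration under assumptions: the stage names, the allowed transitions, and the `DeviceRecord` class are hypothetical and are not taken from OASIS.

```python
from enum import Enum


class Stage(Enum):
    # Hypothetical stage names, loosely following the stages named in the text.
    ENROLLED = "enrolled"
    OPERATING = "operating"
    UPDATING = "updating"
    DECOMMISSIONED = "decommissioned"


# Which stage changes are permitted. Decommissioning is terminal.
ALLOWED = {
    Stage.ENROLLED: {Stage.OPERATING, Stage.DECOMMISSIONED},
    Stage.OPERATING: {Stage.UPDATING, Stage.DECOMMISSIONED},
    Stage.UPDATING: {Stage.OPERATING, Stage.DECOMMISSIONED},
    Stage.DECOMMISSIONED: set(),
}


class DeviceRecord:
    """Tracks one device's current stage and every stage it has passed through."""

    def __init__(self, device_id: str) -> None:
        self.device_id = device_id
        self.stage = Stage.ENROLLED
        self.history = [Stage.ENROLLED]

    def transition(self, target: Stage) -> None:
        """Move to `target` if the transition table allows it."""
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"{self.stage.value} -> {target.value} is not allowed")
        self.stage = target
        self.history.append(target)


device = DeviceRecord("sensor-001")      # hypothetical device identifier
device.transition(Stage.OPERATING)
device.transition(Stage.UPDATING)
device.transition(Stage.OPERATING)
device.transition(Stage.DECOMMISSIONED)
```

Because every change goes through `transition`, the history doubles as an audit trail, and an out-of-order request such as reactivating a decommissioned device fails loudly instead of silently corrupting state.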

This consistency changes how systems are managed.

Updates can be coordinated across large deployments without requiring direct access to individual devices. Configuration can be applied in a structured and repeatable way. The state of the system can be observed in real time, allowing issues to be identified and addressed more quickly.

At the same time, this capability is designed to remain unobtrusive.

It does not impose a specific way of working, nor does it require existing systems to be replaced. Instead, it integrates with them, exposing lifecycle functionality through defined interfaces that can be incorporated into broader workflows.

This approach reduces operational complexity. Not by removing functionality, but by unifying it. Lifecycle management becomes a continuous, predictable process, rather than a collection of isolated tasks performed in response to change. In this way, lifecycle is no longer something that happens after deployment. It is an inherent property of the system — present from the beginning, and sustained throughout its operation.

Control is not established at deployment — it is maintained throughout the lifecycle.

Evolving Workflows at the Edge

As Edge systems mature, the way they evolve begins to change.

In earlier models, device behavior was largely fixed at the time of deployment. Firmware defined how the system operated, and changes required updates to that firmware — introduced carefully and often infrequently, due to the complexity and risk involved.

This model remains valid, particularly in systems where determinism and control are essential.

At the same time, new requirements are emerging. AI-driven behavior, data-dependent decision-making, and dynamic operational conditions introduce a need for systems to adapt more continuously.

The challenge is not only to update devices, but to evolve how they behave over time — without increasing complexity or compromising stability.

This is where the concept of evolving workflows becomes relevant. Rather than treating all changes as firmware updates, the system can be structured to allow certain aspects of behavior to evolve independently.

This includes decision logic, data processing steps, and higher-level control flows that define how the system responds to its environment.

As AI capabilities move increasingly toward the Edge, these workflows begin to take on the characteristics of managed operational assets rather than static firmware components.

Models, inference logic, and decision pathways may evolve independently over time, requiring the same considerations applied to any continuously managed system — versioning, controlled deployment, monitoring, rollback, and lifecycle governance.

Within the platform, this is achieved by separating these workflows from the underlying device foundation. The device itself remains stable — handling communication, security, and lifecycle management — while the workflows that define its behavior can be introduced, updated, or refined independently.

This allows systems to adapt more frequently, without requiring full firmware replacement or tightly coupling evolving AI behavior to the underlying device firmware itself.
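The idea of a stable foundation with replaceable behavior on top can be sketched as a versioned "slot" that holds the current workflow and remembers earlier ones. This is a simplified illustration, not the platform's mechanism: `WorkflowSlot`, the version strings, and the threshold rules are hypothetical, and plain Python callables stand in for deployed workflows.

```python
from typing import Callable, List, Tuple

Workflow = Callable[[float], bool]


class WorkflowSlot:
    """Holds versioned decision logic that can change without touching the host."""

    def __init__(self) -> None:
        self._history: List[Tuple[str, Workflow]] = []

    def deploy(self, version: str, logic: Workflow) -> None:
        """Make `logic` the active workflow, keeping earlier versions for rollback."""
        self._history.append((version, logic))

    def rollback(self) -> None:
        """Discard the active workflow and reactivate the previous one."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()

    @property
    def active_version(self) -> str:
        if not self._history:
            raise RuntimeError("no workflow deployed")
        return self._history[-1][0]

    def run(self, reading: float) -> bool:
        """Evaluate the active workflow against one reading."""
        if not self._history:
            raise RuntimeError("no workflow deployed")
        return self._history[-1][1](reading)


slot = WorkflowSlot()
slot.deploy("1.0", lambda temperature: temperature > 30.0)  # hypothetical alert rule
slot.deploy("1.1", lambda temperature: temperature > 25.0)  # refined rule

alert_with_new_rule = slot.run(27.0)   # True under version 1.1
slot.rollback()
alert_after_rollback = slot.run(27.0)  # False under version 1.0
```

The host code that calls `run` never changes. Only the contents of the slot do, which is the property that lets behavior evolve more often than the firmware around it.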

This model supports both present and emerging approaches.

For many deployments, native execution on a dedicated microcontroller remains the primary method of implementing functionality, providing the performance and determinism required for real-time operation.

At the same time, the system is designed to support more portable execution models, where workflows can be defined, delivered, and updated in a standardized form. This is where WebAssembly becomes relevant.

Not as a replacement for native execution, but as an extension of the model — providing a way to represent evolving workflows in a portable, secure, and efficient format.

As requirements change, and as systems move toward more dynamic behavior, this capability allows the platform to adapt without requiring a fundamental shift in architecture.

In this way, the system supports both stability and change. The foundation remains consistent, while the workflows built upon it can evolve over time — allowing Edge systems to improve continuously, without compromising the characteristics that define them.

Evolution at the edge is not about replacing systems, but extending them.

Protecting What Runs

Security at the edge is often defined by how systems communicate and how devices are isolated.

Encryption protects data in transit. Hardware boundaries limit exposure between components. These mechanisms are essential, and they form the basis of secure system design in constrained environments. They do not, however, address all aspects of risk.

Once application logic is deployed to a device, it becomes part of the execution environment.

In traditional embedded systems, this logic is compiled directly into firmware. While this provides performance and control, it also means that, once deployed, the code exists in a form that can be accessed, inspected, or extracted — particularly in environments where physical access to devices cannot be fully prevented.

Historically, addressing this challenge has required specialized hardware.

Secure elements, protected memory regions, and vendor-specific features can be used to limit access to firmware. While effective, these approaches depend on specific hardware capabilities and are not consistently available across all platforms. They also introduce additional complexity, both in design and in deployment.

As systems evolve to support more dynamic execution models, a different approach becomes possible.

When application logic is no longer bound directly to static firmware, but instead executed within a controlled runtime environment, the point at which protection is applied can shift. Rather than relying solely on hardware constraints, protection can be introduced at the level of execution itself.

Within the platform, this is achieved through SHIELD.

Operating in conjunction with the WebAssembly-based execution model, SHIELD protects application logic as it is delivered, stored, and executed on the device. That logic is no longer exposed in a directly accessible form, and attempts to inspect or modify it are constrained by the execution environment in which it runs.

Because workloads are introduced through a controlled execution environment, the system is also able to maintain confidence in what has been deployed, how it is versioned, and whether execution remains aligned with the expected operational model over time. This becomes increasingly important as Edge systems evolve from static firmware deployments into continuously managed execution environments.
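The text does not describe how SHIELD establishes this confidence, so the sketch below should not be read as its mechanism. It shows one generic building block that any controlled runtime could use: comparing a workload's SHA-256 digest against an expected value before running it. The function names and the sample payload are assumptions; the payload is simply the eight-byte header of an empty WebAssembly module.

```python
import hashlib
import hmac


def workload_digest(payload: bytes) -> str:
    """Hex-encoded SHA-256 digest of a workload image."""
    return hashlib.sha256(payload).hexdigest()


def matches_expected(payload: bytes, expected_digest: str) -> bool:
    """True only if the payload hashes to the digest recorded at deployment.

    hmac.compare_digest performs a constant-time comparison, so the check
    does not leak how many leading characters matched.
    """
    return hmac.compare_digest(workload_digest(payload), expected_digest)


# Hypothetical workload: the WebAssembly magic number followed by version 1.
module = b"\x00asm\x01\x00\x00\x00"
recorded = workload_digest(module)        # stored when the workload is deployed

tampered = module + b"\x00"               # any modification changes the digest

untouched_ok = matches_expected(module, recorded)
tampered_ok = matches_expected(tampered, recorded)
```

A digest check of this kind only detects modification. It does nothing to keep the workload confidential, which is a separate concern from the integrity property illustrated here.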

This capability depends on the same separation that enables evolving workflows.

By decoupling application logic from the underlying firmware, and executing it within a defined runtime, the system creates a boundary within which protection can be applied consistently.

This is not possible in the same way when logic is compiled directly into native firmware, where execution and representation are tightly bound to the hardware.

The result is an additional layer of security.

One that complements communication security and hardware isolation, rather than replacing them. It extends protection into the execution phase, addressing scenarios where physical access is a realistic threat and where intellectual property must be preserved over time.

In this way, protection evolves alongside the system. As workflows become more dynamic and more portable, the mechanisms used to secure them adapt as well — ensuring that the ability to evolve does not introduce new forms of exposure.

Security is no longer only about where code resides, but how it runs.

A Cohesive System

Individually, each component of the platform addresses a specific aspect of operating at the edge.

Communication is optimized for constrained environments. Execution is structured to separate responsibilities. Lifecycle management provides control over distributed systems. Workflows are able to evolve independently of the underlying device. Protection extends into the execution environment itself.

Each of these capabilities responds to a distinct challenge.

Taken together, they define something more — not a collection of features, but a system in which each part supports the others.

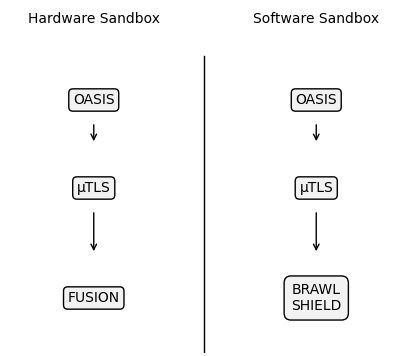

Figure 15: Operational Models: Hardware Sandbox vs Software Sandbox

Communication enables lifecycle control, lifecycle management governs how systems evolve, separation of concerns defines where responsibilities reside, execution models determine how behavior is introduced and updated, and protection ensures that what is deployed remains secure over time.

This interdependence is intentional — the platform is not constructed by layering independent solutions, but by aligning them around a shared model. Each component is designed with the same constraints in mind, and with an understanding of how it will interact with the others.

Within this structure, the system supports more than one operational model.

For environments where determinism and direct control are required, the platform can be realized through a hardware-based model, combining secure communication, lifecycle management, and a dedicated execution environment. In this form, responsibilities are physically separated, and application logic runs natively within its own domain.

At the same time, the system supports a software-defined model, where execution is handled within a controlled runtime environment. In this case, workflows can be delivered, updated, and protected independently, allowing behavior to evolve more dynamically over time.

These models are not mutually exclusive. Although they differ in how execution is realized, they are complementary rather than competing approaches.

At the hardware level, FUSION establishes clear architectural boundaries between application execution and the communication, security, and lifecycle domains responsible for maintaining control over the system. Within the software-defined model, BRAWL and SHIELD extend the same principle into the execution environment itself, allowing workloads and evolving workflows to operate within a controlled runtime boundary.

Hardware separation protects the system boundary. Software sandboxing governs how workloads behave within it.

This flexibility allows the platform to support both present and emerging requirements. Systems that depend on native execution and hardware-level control can operate within a stable, deterministic model. Systems that require more dynamic behavior can adopt a software-defined approach, without requiring a fundamental change in architecture.

As a result, the system behaves consistently. Devices are able to operate independently, while remaining part of a managed environment. Changes can be introduced without disrupting stability. Security is maintained across communication, execution, and lifecycle.

The system evolves without losing control.

In practice, this reduces complexity. Not by limiting capability, but by structuring it. The boundaries between components are clear. The responsibilities of each part are defined. Interactions follow predictable patterns, allowing the system to scale without becoming fragmented.

In this way, the platform supports both stability and change. It provides a foundation that remains consistent over time, while allowing the systems built upon it to adapt as requirements evolve.

RIoT Secure thereby establishes a secure lifecycle control plane and trusted execution foundation for Edge systems. Communication, execution, workload isolation, and lifecycle management are treated as interconnected architectural responsibilities rather than isolated capabilities introduced independently over time.

Hardware separation defines the system boundary. Software sandboxing governs how workloads evolve within it. Lifecycle management maintains visibility and control across the operational lifetime of the system.

Together, these elements allow Edge systems to evolve continuously while remaining secure, observable, and operationally manageable under real-world conditions.

Integration, Not Replacement

Introducing a new system into an existing environment is rarely a purely technical decision.

Beyond functionality, it raises questions of compatibility, disruption, and control. Existing platforms, workflows, and investments must be preserved, even as new capabilities are introduced.

The cost of change is not only measured in implementation effort, but in the impact it has on systems that are already in operation.

In many cases, this creates resistance.

Solutions that require fundamental changes to architecture, tooling, or operational processes can be difficult to adopt, regardless of their technical merit. The more a system demands replacement, the more friction it introduces.

The platform is designed with this in mind.

Rather than replacing existing systems, it integrates with them. Communication is structured in a way that allows interaction with established protocols and infrastructures. Lifecycle management can be accessed through defined interfaces, allowing it to operate alongside existing tools and platforms.

This approach preserves continuity, allowing organizations to maintain their current workflows, visualization layers, and business logic, while introducing lifecycle control, secure communication, and structured execution where needed.

The system becomes an extension of what already exists, rather than an alternative to it. This is particularly important in environments where multiple systems must coexist.

Devices may interact with cloud platforms, analytics systems, or operational dashboards that are already deeply integrated into the organization. Replacing these systems is neither practical nor desirable. Instead, the value lies in enhancing how devices are managed, secured, and evolved within that ecosystem.

At the same time, integration does not imply limitation.

The platform provides a consistent model for communication, lifecycle, and execution, allowing it to operate independently when required, or as part of a broader system when integrated. This flexibility ensures that adoption can be incremental, rather than all-or-nothing. Over time, this reduces the barrier to adoption.

Capabilities can be introduced where they provide immediate value, without requiring a complete redesign of the system. As requirements evolve, the platform can take on a larger role, extending its influence without disrupting existing operations.

In this way, the system aligns with how organizations work in practice. Not by replacing what is already in place, but by strengthening it.

Proven in Practice

The concepts described throughout this chapter are not theoretical.

They have been applied in environments where reliability, security, and long-term operation are essential. In these contexts, systems are expected to function consistently over time, under conditions where failure is not easily tolerated and where access to devices is inherently limited.

One such environment is found within airport operations.

Here, systems must operate within a tightly controlled security framework, interacting with infrastructure that demands both stability and accountability.

Devices are deployed across distributed locations, often with limited physical access, and must continue to perform as expected while adapting to evolving operational requirements.

Within this context, the platform has been applied to support lifecycle management as a continuous process.

Initial deployments began as controlled pilots, where systems were introduced incrementally and evaluated under real-world conditions. Over time, these deployments expanded, transitioning into sustained commercial operation, where devices are managed, updated, and monitored as part of daily activity.

This progression reflects the model described throughout this work.

Systems are not deployed once and left unchanged. They are provisioned, observed, updated, and adapted over time, with each stage managed within a consistent framework. Changes can be introduced without disruption, and devices remain aligned with operational requirements as those requirements evolve.

Over an extended period of operation, this approach has demonstrated its value.

Devices remain under control without requiring continuous manual intervention. Updates can be applied securely and predictably. The system maintains visibility into its state, allowing issues to be identified and addressed in a timely manner.

At the same time, the underlying architecture continues to support both stability and change. This is particularly significant in environments where security and reliability are closely linked.

The ability to manage devices throughout their lifecycle — while maintaining clear boundaries between execution, communication, and control — ensures that systems can operate within strict requirements without becoming rigid or difficult to adapt.

In this way, the platform moves beyond concept, becoming part of operational reality, supporting systems that must function continuously, evolve over time, and remain aligned with the environments in which they operate.